AI Designed to Extract Insights From Your Organization's Content

insights, create summaries, and perform actions via a simple, intuitive interface built into users’ workflows.

Reduce Security Risks

Improve Insight Accuracy

Elevate Workflows

Increase the speed at which work can be completed with AI built into existing workflows.

Powerful Intelligence Features

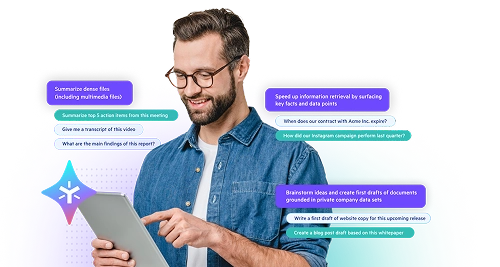

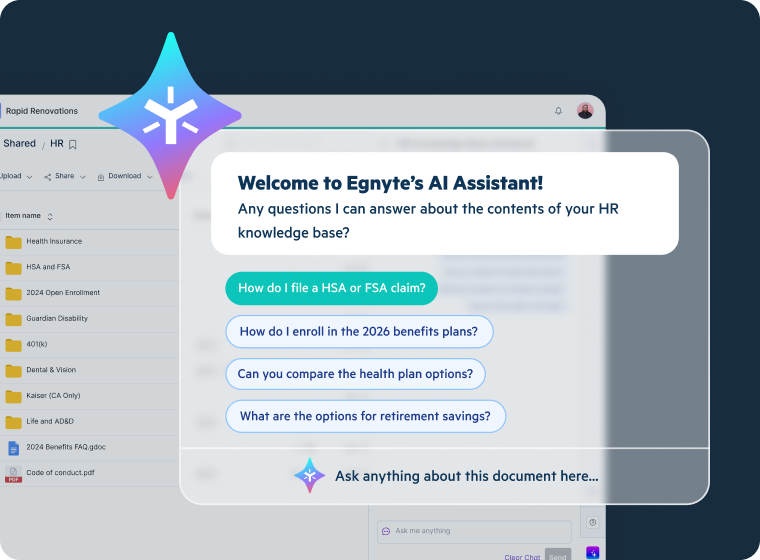

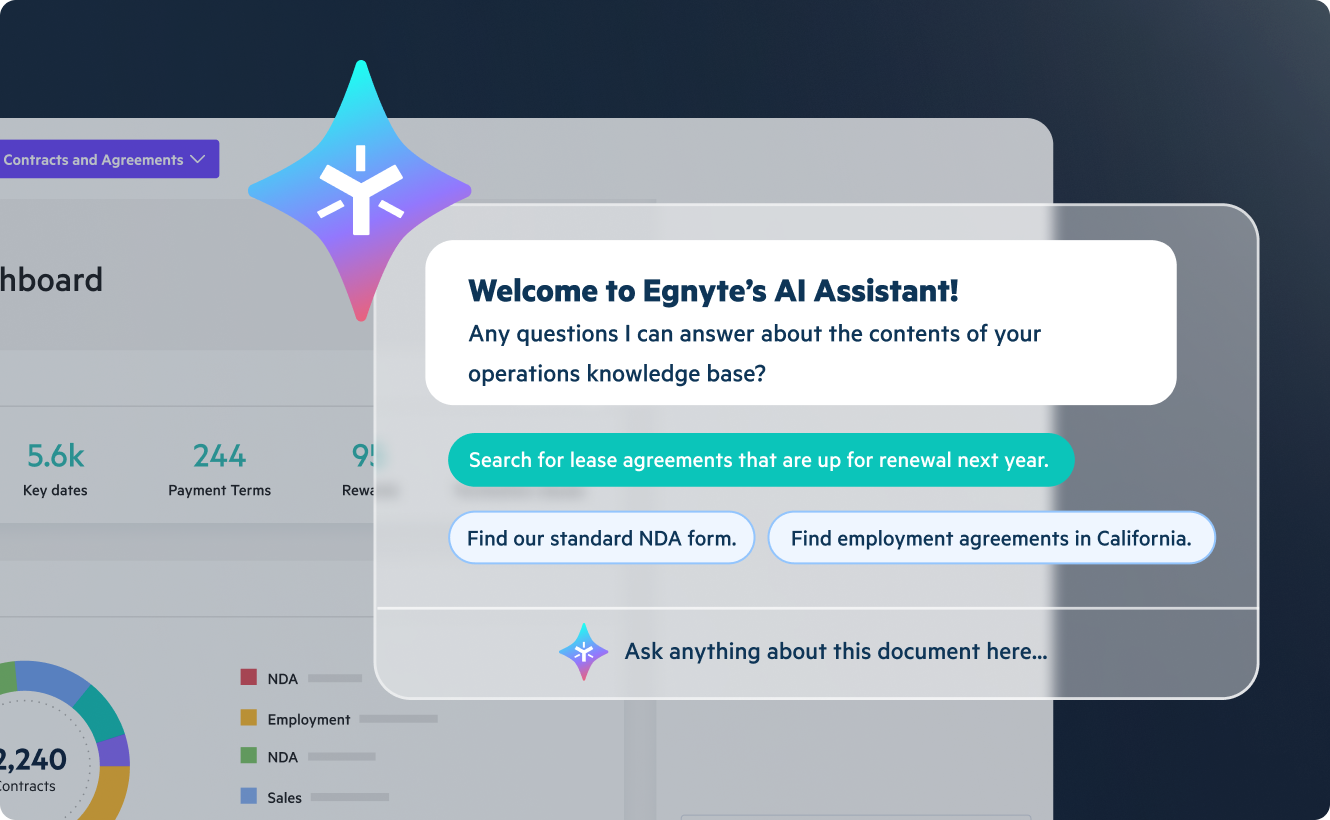

Conversational AI With AI Assistant

- Save time and reduce workload with instant document summaries

- Drive informed decisions with fast insights generated from your content

- Craft better prompts with one-click Prompt Wizard

- Boost team efficiency with shared prompt libraries and custom prompts you can save

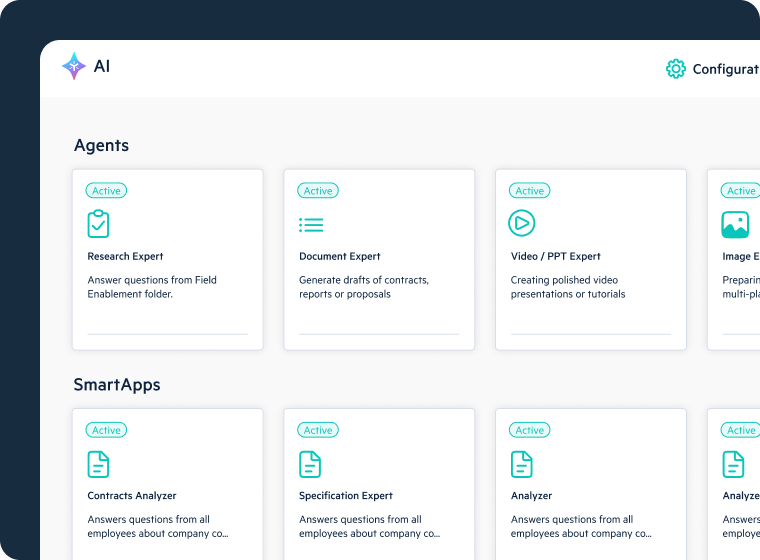

AI Agents

- Easily customize AI agents to your workflows

- Automate workflows for role-specific tasks with AI agents

- Enhance quality, speed, and strategic impact with project-specific agents—Specifications Analyst and Building Code Analyst

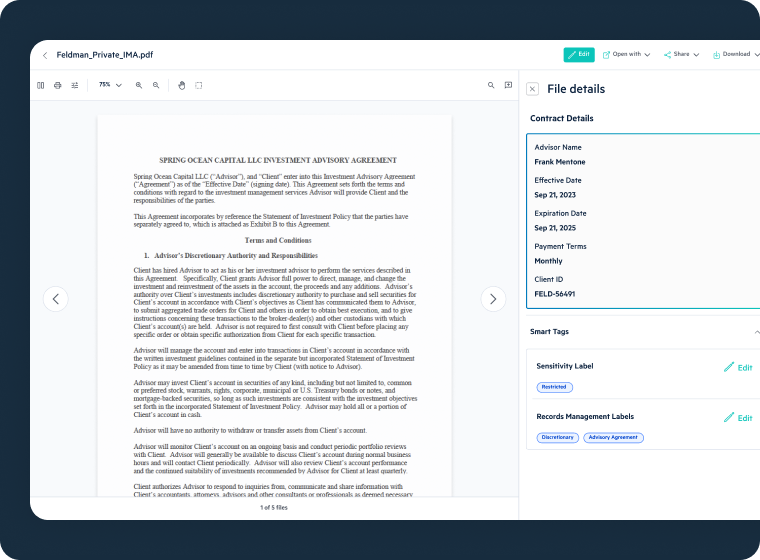

- Extract contract clauses instantly and simplify legal language with Contract Analyst

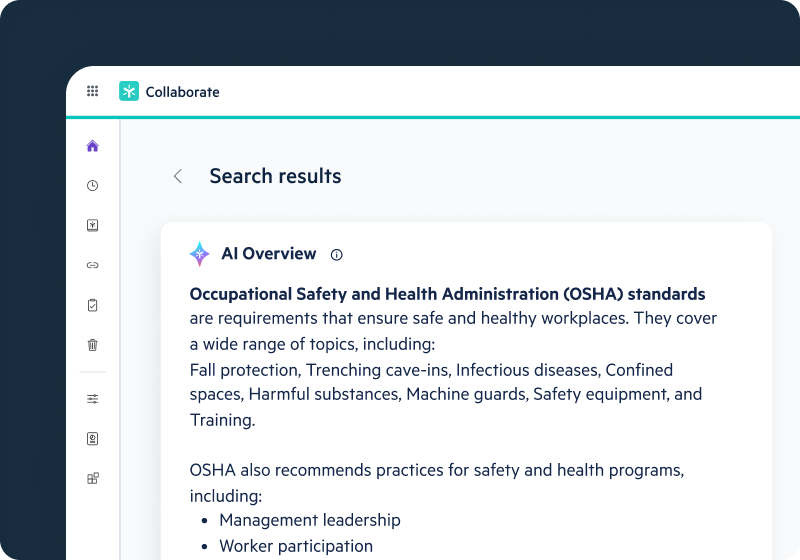

AI Search

- Generate AI-driven summaries from top-ranked results

- Discover content with unified search across recent files, bookmarks, and folders

- Upload an image to find visually similar images easily

- Access photos tagged by geolocation within a designated area and other image metadata

- Transcribe AV files for easy search and enhanced content analysis

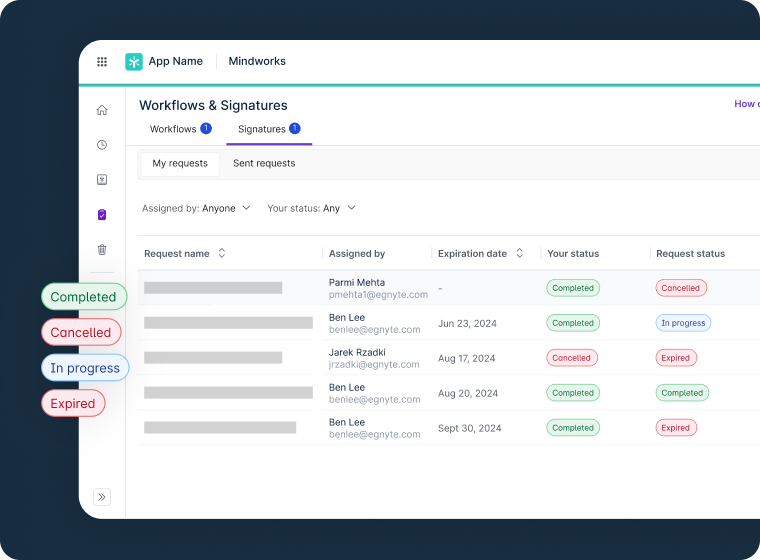

AI Workflow

- Automate work processes by combining AI with workflows

- Initiate a new workflow based on AI-applied metadata

- Trigger an AI agent action as part of a workflow step

Extractors

- Use industry-specific AI/ML to extract metadata from images and documents

- Integrate exported metadata via API in JSON or CSV

- Enhance organization and workflows with customized reports to boost productivity
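As a rough illustration of consuming exported metadata, the sketch below converts a JSON export into CSV for a reporting tool. The field names (`file`, `doc_type`, `gps`) and the shape of the export (a JSON array of flat objects) are assumptions made for this example, not the documented export format.

```python
import csv
import io
import json


def metadata_json_to_csv(json_text):
    """Convert a JSON array of flat metadata records into CSV text.

    Assumes each record is a flat object; keys missing from a record
    are written as empty cells.
    """
    records = json.loads(json_text)
    # Use the union of all keys so records with different fields still fit.
    fieldnames = sorted({key for record in records for key in record})
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


# Hypothetical export payload, for illustration only.
SAMPLE_EXPORT = (
    '[{"file": "a.pdf", "doc_type": "invoice"},'
    ' {"file": "b.jpg", "gps": "37.4,-122.1"}]'
)

if __name__ == "__main__":
    print(metadata_json_to_csv(SAMPLE_EXPORT))
```

Taking the union of keys keeps the CSV header stable even when documents and images carry different metadata fields.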

Smart Tags

- Scan and label content for better organization and protection

- Auto-tag documents and images for improved discovery and search

- Generate labels like Confidential or Legal to improve organization and detection by DLP and CASB systems

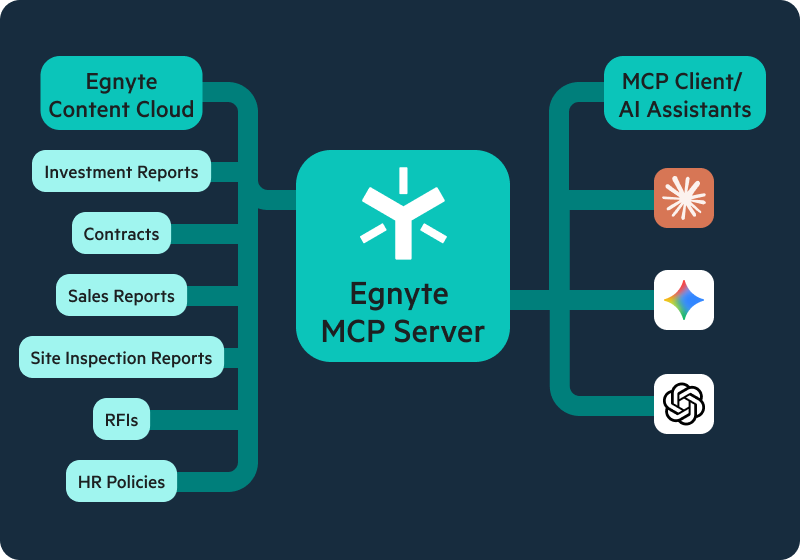

Egnyte MCP Server

- Unlock AI insights while keeping sensitive content secure and compliant

- Connect assistants like ChatGPT, Claude, and AI Assistant to your enterprise data

- Deploy on your terms with open-source or fully managed options

- Ensure AI access respects your existing permissions and AI tools only see authorized content

See AI Assistant in Action

Boost productivity and improve decision-making with generative AI features that:

- Instantly summarize files, ask questions in natural language, and generate new content

- Share prompt libraries across your teams and create custom prompts to enhance productivity

- Discover and extract insights from multi-modal content like images, designs, and audio/video

FAQs

A critical requirement for enterprise AI search is that results respect the same permission boundaries as direct file access: users should never discover content through search that they are not authorized to view directly. Permission-aware AI search is built on the platform's existing granular permission model, meaning results are automatically scoped to each user's individual access rights in real time. This is a fundamental architectural difference from bolt-on AI search tools that index content independently of the underlying content management system, which can inadvertently surface unauthorized content. For organizations handling sensitive client data, health records, or defense information, permission-aware AI search is a compliance requirement, not a feature.
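The idea of scoping results to each user's access rights at query time can be sketched as follows. This is a minimal illustration, not the platform's implementation: the `Document` type, the group-based access list, and the substring matching are all assumptions chosen to keep the example small.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Document:
    """Hypothetical indexed document carrying its own access-control list."""
    path: str
    text: str
    allowed_groups: frozenset = field(default_factory=frozenset)


def permission_aware_search(query, documents, user_groups):
    """Return paths of documents that match the query AND that the user may read.

    The permission check runs at query time against the caller's groups,
    so a match the user cannot open is never returned.
    """
    groups = set(user_groups)
    needle = query.lower()
    return [
        doc.path
        for doc in documents
        if needle in doc.text.lower() and groups & doc.allowed_groups
    ]


# Illustrative data only.
INDEX = [
    Document("/hr/salaries.xlsx", "2024 salary bands", frozenset({"hr"})),
    Document("/eng/roadmap.md", "2024 roadmap and salary budget", frozenset({"eng", "hr"})),
    Document("/public/handbook.pdf", "employee handbook", frozenset({"eng", "hr", "sales"})),
]

if __name__ == "__main__":
    print(permission_aware_search("salary", INDEX, ["eng"]))
```

In this sketch an engineer searching for "salary" sees only the roadmap, while the HR spreadsheet stays hidden even though it matches the query.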

An enterprise AI assistant must operate within the same governance boundaries as any other user: it should only read, summarize, or act on content that the user it serves is already authorized to access. A well-governed AI Copilot is grounded in the organization's own content and enforces granular permissions at every interaction, ensuring it never surfaces information from files outside a user's permitted scope. Responses should be traceable, with the assistant citing source documents to allow verification and provide a clear audit trail for compliance teams. Because enterprise AI runs within the organization's private environment, no content is transmitted to external model providers as training data, making it suitable for regulated content including PHI, CUI, and confidential financial data.

Deploying an AI assistant without an underlying data governance framework creates compliance risks distinct from, and often more serious than, the risks posed by the AI tool itself. Without permission enforcement, an AI assistant may surface regulated data to unauthorized users, creating a de facto data breach without malicious intent. AI tools that retain prompts as training data can embed confidential enterprise content in public models, violating data residency and contractual obligations. Without audit trails for AI interactions, organizations cannot demonstrate to regulators that access to sensitive content was appropriately controlled, an increasingly required standard under GDPR, HIPAA, CMMC, and emerging AI governance frameworks.

Enterprise content is rarely stored in a single system; most organizations have files distributed across cloud storage, on-premises file servers, and business applications. An enterprise AI search platform should connect to primary cloud storage providers, on-premises servers, and key business applications such as Salesforce, Microsoft 365, and Google Workspace. Equally important is that each connector honors the source system's permission model: the AI search index should not flatten access controls that exist in the original repositories. Organizations should also assess whether the platform supports multimodal content, including audio and video transcription and image metadata extraction, which significantly expands the body of content available for AI-assisted discovery.

When employees use consumer AI tools to query or summarize sensitive documents, they typically upload content to external services with no guarantee of confidentiality, data residency compliance, or freedom from use as training data. This creates an invisible risk known as shadow AI, in which regulated information including PII, PHI, and proprietary intellectual property leaves the organization's control without triggering any access control or DLP alert. AI safeguard capabilities mitigate this by detecting and classifying sensitive content and applying policies that restrict sharing to external or unapproved AI services. Providing employees with a governed, permission-aware AI assistant embedded in their existing content workflows removes the productivity incentive to use consumer tools for document queries.

As AI becomes embedded in document workflows, compliance teams need the same auditability from AI-driven processes as from human-driven ones, including records of what actions were taken, by whom or by which agent, on which content, and when. An AI workflow platform built for regulated environments should provide end-to-end audit trails capturing AI-triggered actions alongside human actions, making the full workflow history available for compliance review. Policy-based controls should define which AI-triggered actions are permitted on which content categories, providing guardrails that prevent AI workflows from operating outside approved boundaries. For organizations subject to CMMC, HIPAA, GDPR, or SOC 2, this level of AI workflow auditability is increasingly expected as part of the compliance evidence package.
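A minimal sketch of the two ideas above, policy-based controls on AI-triggered actions and a single audit trail shared by human and AI actors, might look like this. The policy table, category names, and event fields are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone

# Hypothetical policy: which AI-triggered actions are allowed per content category.
AI_POLICY = {
    "public": {"summarize", "tag", "move"},
    "confidential": {"summarize", "tag"},
    "phi": {"tag"},
}


@dataclass(frozen=True)
class AuditEvent:
    actor: str        # user name or agent name
    actor_type: str   # "human" or "ai_agent"
    action: str
    target: str
    category: str
    allowed: bool
    timestamp: str    # UTC, ISO 8601


def record_action(log, actor, actor_type, action, target, category):
    """Apply the policy to AI actors, append an audit event, and return it.

    Denied attempts are logged as well, so reviewers can see what an
    agent tried to do, not only what it was allowed to do.
    """
    if actor_type == "ai_agent":
        allowed = action in AI_POLICY.get(category, set())
    else:
        allowed = True  # assumption: human permissions are checked elsewhere
    event = AuditEvent(actor, actor_type, action, target, category, allowed,
                       datetime.now(timezone.utc).isoformat())
    log.append(asdict(event))
    return event
```

Recording who or what acted, on which content, and when, in one log gives compliance reviewers a single workflow history rather than separate human and AI records.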

A successful departmental AI agent pilot begins with a clearly scoped, high-frequency task where time savings are measurable and the risk of error is manageable. Document summarization, metadata tagging, contract clause extraction, and compliance checklist completion are common starting points. A no-code agent builder allows business teams, not just IT, to configure role-specific agents tailored to their workflows without requiring software development resources. Pre-built agents for AEC and legal teams provide ready-to-deploy starting points, reducing the time from pilot decision to first result. Because agents operate within the platform's existing permission model, sensitive content is never exposed beyond each user's authorized scope, which is critical for regulated departments piloting AI on live business data.